Facebook Emotional Contagion Experiment

Overview

The Facebook Emotional Contagion Experiment was a psychological study conducted by researchers from Facebook and Cornell University to test whether an emotional bias in the content of a Facebook user's news feed can affect that user's own emotional state. Upon its publication in June 2014, the paper was criticized for manipulating Facebook users’ emotions without their consent and for failing to obtain prior approval from Cornell's ethics committee.

Background

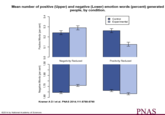

On June 17th, 2014, the Proceedings of the National Academy of Sciences[1] (PNAS) published a paper titled "Experimental evidence of massive-scale emotional contagion through social networks," authored by Adam D. I. Kramer of Facebook's Core Data Science Team, Jamie E. Guillory of the Center for Tobacco Control Research and Education at the University of California, San Francisco, and Jeffrey T. Hancock of the Departments of Communication and Information Science at Cornell University. As part of the experiment, Facebook data scientists altered the news feeds of randomly selected users, reducing the amount of content containing positive or negative terms, to see whether those users would respond with more negative or more positive status updates of their own. The study found that greater exposure to one type of emotional expression led users to express more of that same type.

"When positive expressions were reduced, people produced fewer positive posts and more negative posts; when negative expressions were reduced, the opposite pattern occurred. These results indicate that emotions expressed by others on Facebook influence our own emotions, constituting experimental evidence for massive-scale contagion via social networks."

Notable Developments

News Media Coverage

On June 26th, the science and technology magazine New Scientist[4] published an article reporting on the findings of the experiment. In the following days, other news sites published articles about the experiment and the growing public backlash, including International Business Times,[9] The Telegraph,[10] The Guardian,[11] RT,[12] The Washington Post,[13] Business Insider,[14] Salon,[15] AV Club,[16] Forbes[17] and the BBC.[18]

Online Reaction

On June 27th, OpenNews director of content Erin Kissane posted a tweet[7] urging her followers to delete their Facebook accounts and Facebook employees to quit their jobs.

On June 28th, Redditor hazysummersky submitted a link to an AV Club[16] article about the experiment to the /r/technology[3] subreddit, where it gained over 3,500 points and 1,300 comments in the first 72 hours. That same day, Clay Johnson, CEO of the Department of Better Technology, tweeted[8] that he found the Facebook experiment "terrifying."

Ethics Committee Approval Controversy

On June 28th, 2014, The Atlantic quoted PNAS editor Susan Fiske, who said she had been concerned about the study but was told that the authors' "local institutional review board had approved it." On June 29th, Forbes[6] published an article about the ethics review controversy, which pointed to a part of Facebook's data use policy stating that the company may use user data for "internal operations, including troubleshooting, data analysis, testing, research and service improvement."

On June 30th, Cornell University[5] published a statement indicating that, because the research had been conducted independently by Facebook, the university's review board had concluded that no review on its part was required.

"Because the research was conducted independently by Facebook and Professor Hancock had access only to results – and not to any individual, identifiable data at any time – Cornell University’s Institutional Review Board concluded that he was not directly engaged in human research and that no review by the Cornell Human Research Protection Program was required."

Adam Kramer's Apology

On June 29th, Kramer posted a Facebook[2] status update explaining the methodology behind the experiment and apologizing for any anxiety caused by the paper. In the first 48 hours, the post gathered more than 750 likes and 140 comments.

External References

[1] PNAS – Experimental evidence of massive-scale emotional contagion through social networks

[2] Facebook – Adam Kramer

[3] Reddit – Facebook tinkered with users’ feeds for a massive psychology experiment

[4] New Scientist – Even online emotions can be contagious

[5] Cornell University – Media statement on Cornell University’s role in Facebook

[6] Forbes – Facebook Doesn’t Understand the Fuss

[9] International Business Times – Is Facebook’s Emotional Contagion Experiment Really That Big a Deal?

[10] The Telegraph – Facebook conducted secret psychology experiment

[11] The Guardian – Facebook reveals news feed experiment to control emotions

[12] RT – Facebook manipulated users’ emotions as part of psychological experiment

[13] The Washington Post – Facebook responds to criticism of its experiment on users

[14] Business Insider – Facebook Ran a Huge Psychological Experiment on Users

[15] Salon – Why the “everybody does it” defense of Facebook’s emotional manipulation experiment is bogus

[16] AV Club – Facebook tinkered with users’ feeds for massive psychology experiment

[17] Forbes – Facebook Manipulated User News Feeds to Create Emotional Responses
